Making AI content recommendations persuasive

Persuasive TV: Making content recommendation more persuasive!

Date: July-August, 2019 (2 months)

Role: Solo UX designer (no other member)

Keyword: User research, Psychological concept-based approach

Category: Research & UX

Brief: This project digs into how recommendations can be made more persuasive, using user research and psychological concepts. Based on the results, UX ideas are proposed with their rationales.

Intro

‘Why all of a sudden do you recommend these movies?’

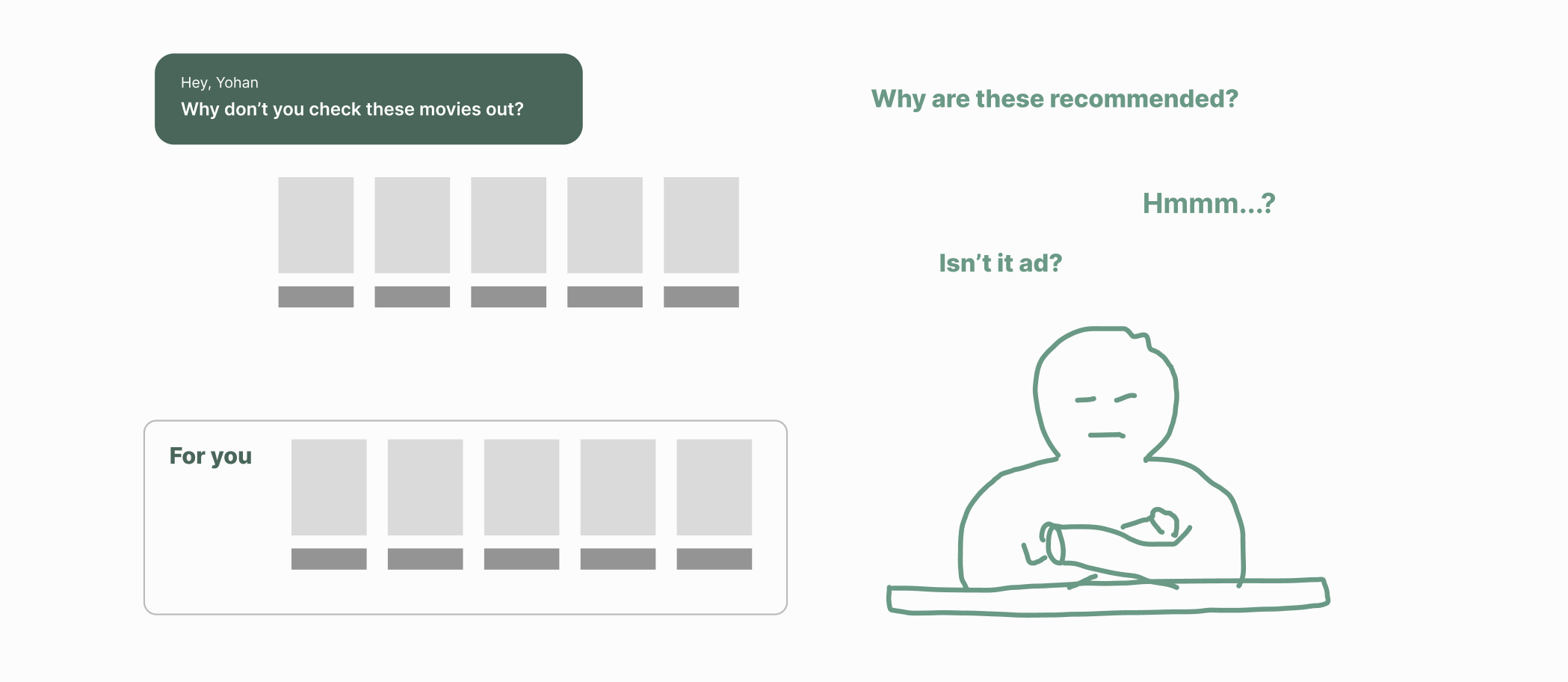

Many OTT and online TV streaming services, such as Netflix and Hulu, have recommendation features. In most cases, they don’t explain in any detail why a given list is recommended to me. They just put a recommendation list in the middle of the content exploration scene with short wording such as ‘Top picks for you’. The user sees no reason or logic behind it. This way of recommending is far from persuasive, and in the end it can make the ‘Recommendation’ feature feel meaningless, even though recommendation is an important part of AI-connected services. This project is about how to make recommendation UX more persuasive for content platform services.

Through this project, I want you to notice

1) that I initiated this project and it became an official internal one,

2) how I checked the validity of the research and got direction through survey analysis,

3) that I conceptualized the situation so we can think logically with somewhat academic concepts,

4) how I approached the solution through psychological bias

Steps

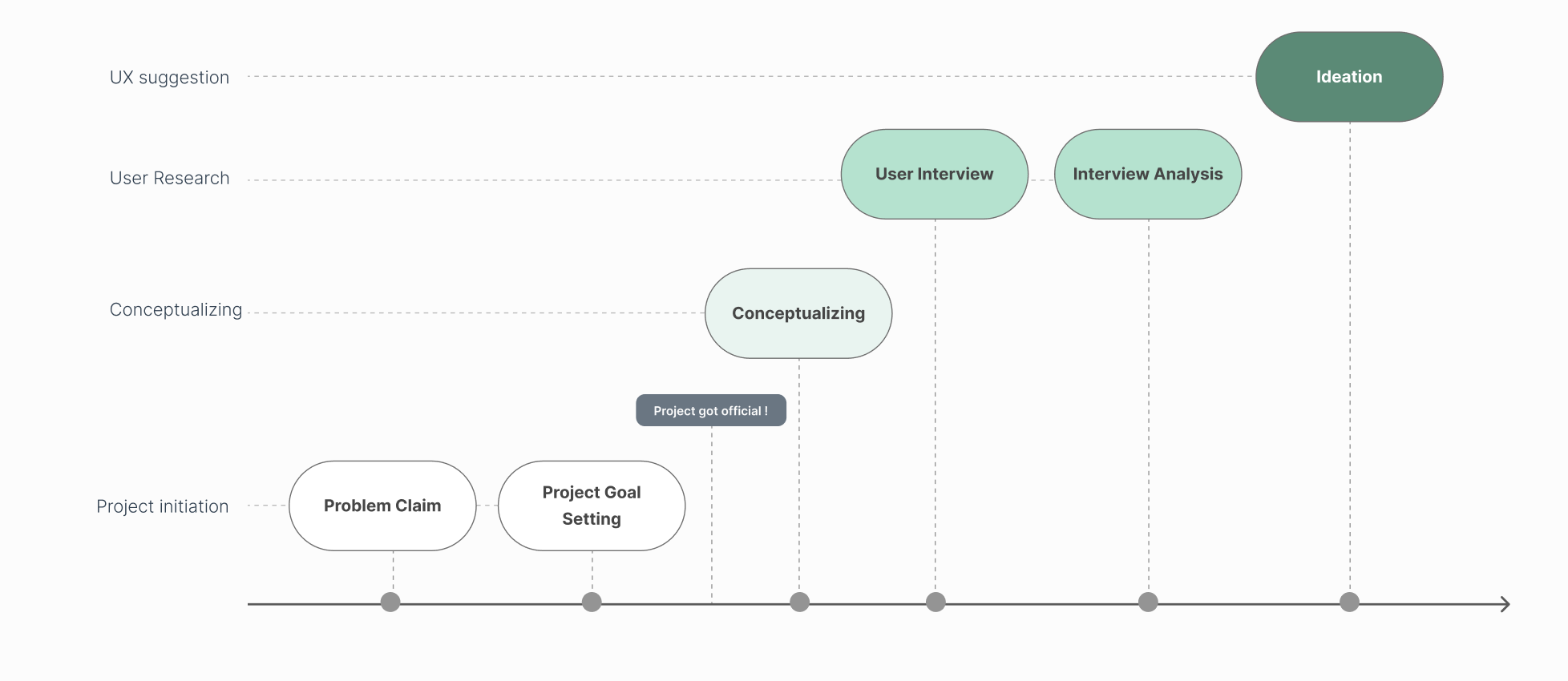

This project followed relatively simple steps, from ‘Problem claim’ to research to ideation. Although I had hoped to run a rigorous iterative process, I acknowledge that we missed real user testing and iterative improvement at the end due to the team situation.

Problem Claim

“Isn’t it a random promotion list?”

“Are they what I want?”

Recommendations from the TV are not really persuasive.

-

This project started from my dissatisfaction with recommendation UX, which I raised after working on a few projects related to AI assistant-connected services.

In those days I was primarily involved in new product development projects. The new products we worked on were mostly about putting an AI assistant in the TV. We discussed many AI assistant topics, and one of the core questions was how to design the recommendation UX.

Although recommendation technology was developing and becoming more accurate, the UX was still the same as before: just a list in the middle of the content exploration scene.

When we persuade somebody to do something, we prepare good reasons why they should do it, instead of just saying a single sentence like ‘Would you do ~?’.

Compared to this kind of human communication, the current recommendation UX didn’t look persuasive at all. It suddenly throws a bunch of recommendations at the user without any reason. It doesn’t look like a valid or well-prepared proposal. It sometimes makes me feel like, ‘How dare you give me a recommendation like this? Isn’t it a random list?’ In human communication, giving a recommendation without a good reason could even be a bit rude.

So I raised this issue with colleagues and the UX team leaders, saying we should explore this UX further so we could apply a new recommendation UX to future products. They agreed it was important but were not sure how to proceed. So I started thinking about making it a real project.

Project Goal Setting

To make this an officially authorized project, I clarified the goal of the project and its expected effects: what’s good for our company and team, what we want to make, and what we would do with the results. With these goals, I gave a presentation to the team leaders. The proposal passed, and it became an official project in the company!

External Goal

Providing a guide and reference for our overall recommendation UX in the future, which was expected to be an era of AI assistant-connected services

Internal Goal

Building a content recommendation UI that has greater persuasive power

Expected Effect

- Upgrading current product competency

- Taking the initiative in design discussions with clients

Conceptualizing

Keywords: Recommendation, Persuasion, Heuristic-systematic information processing, Involvement, Persuasion strategy

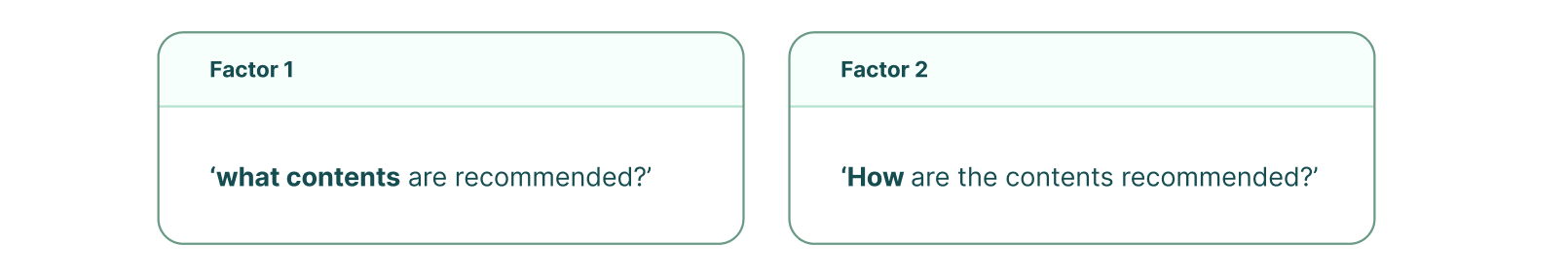

2 factors in the recommendation

I saw 2 factors in a recommendation: “What contents are recommended?” and “How are the contents recommended?”

Factor 1: What contents?

In other words, it’s about how well the contents are matched to the user’s taste. This is not a factor UX can get into. The recommended content is curated by an algorithm, or maybe by a content curator or operations team, and they just put the content there. A simple recommendation list is then given to users.

Factor 2: How is it recommended?

This is the factor UX can get involved in.

“How to recommend something?” = “How to persuade something?”

There are various ways to persuade. For example, advertisements use different persuasion strategies: some appeal to logical thinking by comparing product specs, and others appeal to emotion. Sometimes just showing the object is enough if it looks really good or meaningful. In OTT / IPTV services, the current recommendation seems to just show the content, without making any further effort to persuade the user that the recommended content is worth watching.

Then, how can something become more persuasive? What ways of persuading are there?

Recommendation as a persuasion

What is a recommendation? When users don’t have specific contents to watch in their minds, a recommendation helps them to find something interesting to watch. In other words, it's making a user think something is good, so in the end, the user chooses that one. In this perspective, it is a kind of persuasion.

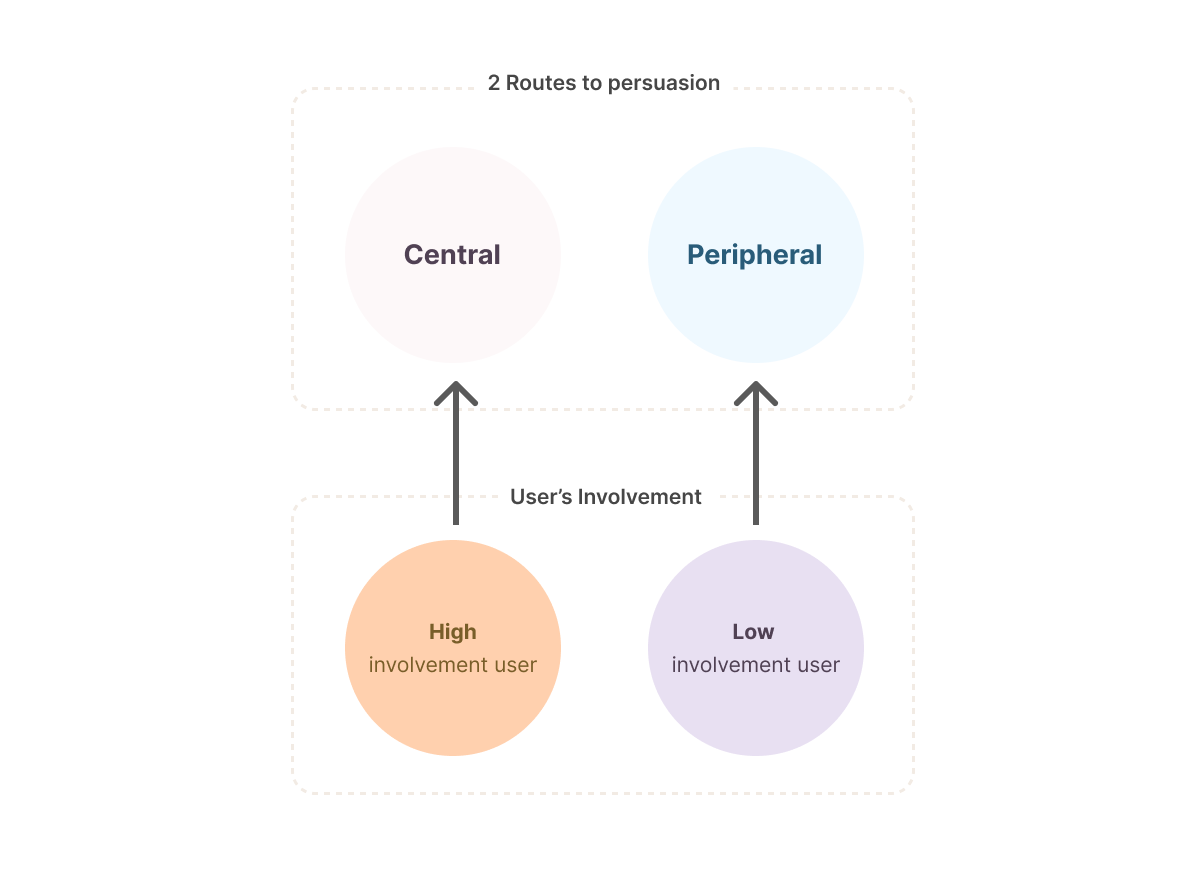

2 Approaches of persuasion: Heuristic vs. Systematic

Dual information processing theory

In psychology and marketing, there are models explaining that a person processes information in two different ways, commonly called ‘dual information processing theory’ (e.g., the ‘Elaboration Likelihood Model: ELM’ and the ‘Heuristic-systematic information processing model: HSM’).

*in this document, I’ll just use terms from Elaboration Likelihood Model

Central Route

If the user thinks the matter is important, knows a lot about it, and feels deeply involved in it (= high involvement) -> they will make a decision carefully and thoughtfully, in a logical way.

Peripheral Route

If the user thinks the matter is not important, knows little about it, and does not feel deeply involved in it (= low involvement) -> they will make a decision quickly and roughly, in a heuristic way.

Then, which approach should we take?

Many marketing strategies and advertisements choose their approach according to the target user’s expected involvement with the product. Products are sometimes divided into a high-involvement group and a low-involvement group, and that grouping is then used to decide the marketing strategy, such as the style of advertisement.

So, ideally, the approach should be decided by the high/low involvement category.

But content watching is not a product that we can easily assume falls into the high- or low-involvement category. It takes time and money in daily life, and it may represent a person’s cultural taste, so some people consider it important while others don’t. I thought it would differ from person to person.

So we should do hands-on research to check what degree of involvement our participants have, and also how satisfied they are.

Then, what do we do?: Survey

Through a survey, we can measure each individual’s level of involvement and level of satisfaction with the current recommendation.

We can then see whether there is a relationship between involvement and satisfaction. We can separate participants into high- and low-involvement groups and, from their satisfaction levels, see which group is having trouble. Then we can take the approach that suits that group.

For example, possible scenarios are as below:

1. If all participants are satisfied with the current version of recommendation, maybe we should consider not proceeding.

2. If no participants are satisfied with the current version of recommendation, that means involvement doesn’t affect the satisfaction level. So maybe we can proceed with the project, but we can’t use involvement to choose a persuasion strategy direction.

3. If one involvement group shows high satisfaction, we can exclude the persuasion strategy that corresponds to that group.

User Research

User Research Goal

1. To check if there’s a relation between involvement and level of satisfaction.

2. To find users’ pain points & unmet needs on TV recommendation UX in detail

Recruitment

Participants were recruited through an online application form distributed by internal company email. The mail summarized the research topic, goal, expected time, applicant conditions, and the reward (cafe drink coupons).

The application form included questions about 1) participant variables that might bias the results, such as profession, the service they currently use, and familiarity with AI assistants, 2) the level of satisfaction they feel with recommendations, and 3) content-watching involvement.

Procedure

Survey: 14 survey answers came in through the interview application form.

Interview: 5 applicants were selected based on their responses.

Procedure 1: Survey

-

When I watch a new program or VOD, I look up a lot of information and make a careful decision.

When I watch a new program or VOD, I search a lot for alternatives to see if this is the best option.

Choosing what to watch on TV is very important to me

After choosing what to watch on TV, it doesn't matter to me if I feel like I made the wrong choice.*

It doesn't matter to me what I choose to watch on TV*

When it comes to choosing what to watch on TV, it's hard to make the wrong choice*

It's hard to say that I like to choose what to watch on TV.

I can tell what someone is like based on what they see

-

Recommendation from TV service is an enlightening experience.

Recommendation from TV service is unpleasant (reverse).

Survey Result

14 participants applied through the online application form, which included the survey.

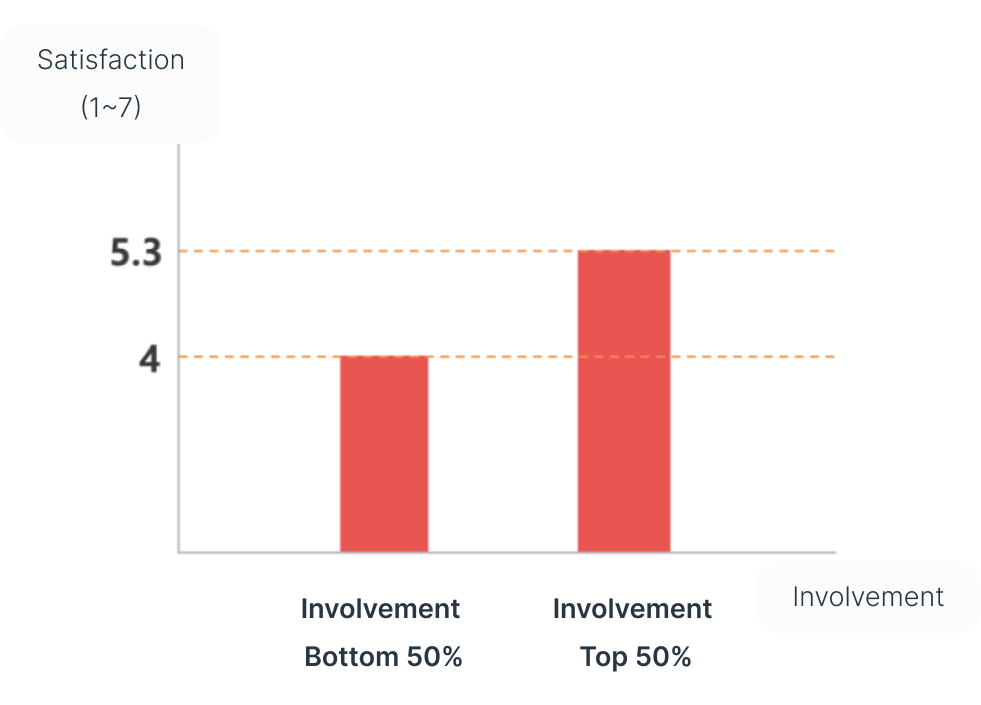

The more they care about content watching, the more they are satisfied with the current content recommendations.

The satisfaction level is measured on a Likert scale: 1 (very low) to 7 (very high).

The top 50% involvement group showed a satisfaction level of 5.3, while the bottom 50% group showed 4. It looks like the top 50% group is satisfied, while the bottom group feels neutral: not high, but also not bad.
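As a rough illustration of this kind of scoring (not the analysis actually used in the project), the sketch below reverse-codes the items marked with * or (reverse), averages each scale, and compares mean satisfaction between the top and bottom halves by involvement. It assumes the involvement items were also answered on the 1 to 7 scale; the function names and the toy respondents are hypothetical, not the real survey data.

```python
from statistics import mean

SCALE_MAX = 7  # 7-point Likert scale (assumed for involvement items too)


def reverse(score):
    """Reverse-code a 1..7 answer so that 1 <-> 7, 2 <-> 6, and so on."""
    return SCALE_MAX + 1 - score


def scale_score(answers, reversed_items):
    """Average one respondent's answers after reverse-coding marked items.

    reversed_items holds the 0-based positions of the reverse-worded items.
    """
    return mean(
        reverse(a) if i in reversed_items else a
        for i, a in enumerate(answers)
    )


def split_means(respondents):
    """Rank respondents by involvement and split them into two halves.

    Returns (mean satisfaction of top half, mean satisfaction of bottom half).
    With an odd number of respondents the extra one lands in the bottom half.
    """
    ranked = sorted(respondents, key=lambda r: r["involvement"], reverse=True)
    half = len(ranked) // 2
    top, bottom = ranked[:half], ranked[half:]
    return (
        mean(r["satisfaction"] for r in top),
        mean(r["satisfaction"] for r in bottom),
    )


# Hypothetical respondents, already scored per scale (NOT the real data).
toy_respondents = [
    {"involvement": 6.0, "satisfaction": 6.0},
    {"involvement": 5.0, "satisfaction": 5.0},
    {"involvement": 3.0, "satisfaction": 4.0},
    {"involvement": 2.0, "satisfaction": 4.0},
]
top_mean, bottom_mean = split_means(toy_respondents)
```

With only 14 respondents, a split like this gives a direction to explore rather than a statistically firm result.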

The level of ‘satisfaction with recommendation’ rises with the level of ‘involvement in content watching’.

In other words, for people who care a lot about content watching, recommendation is already working well, while for people who care less about it, recommendation is not good, but also not bad.

It’s assumed that a high-involvement group can clearly differentiate what content they like. And if they see the result is what they like, they think it’s good. If the recommendation result is not what they like, they won’t be satisfied.

On the contrary, it’s assumed that the low-involvement group is not sure whether a recommendation is well made or not. This is probably why the graph above shows a neutral satisfaction level for this group. Given only the results, they can’t tell whether the results are good or bad.

Our survey shows that the high-involvement group had a higher satisfaction level (5.3 out of 7). This suggests the recommendation algorithm is already working well for them.

But the low-involvement group showed a lower satisfaction level (4 out of 7). It’s assumed that because the low-involvement group doesn’t clearly know what they like, they can’t be satisfied with recommendations however well they are made.

So, since people in the high-involvement group are already satisfied, we should focus on people in the low-involvement group.

What will make the low-involvement group think a recommendation is good is a heuristic cue, a clear sign that the recommendation is what they like, so that they are assured it’s a valid and correct recommendation.

Interpretation & Implication

Based on ‘Procedure 1: Survey’, we could narrow the target group to the low-involvement group. So interviews were done with 5 interviewees from the low-involvement group.

Each interview took 40 minutes to 1 hour.

Each was a one-on-one, semi-structured, in-depth interview.

Questions were mostly about overall usage and attitude, and about the important factors in choosing content.

Procedure 2: Interview

I think TV is ‘OOO’

I think AI assistant is ‘OOO’

I think TV content recommendation is ‘OOO’

When do you make VOD purchases?

What will make you use TV more?

What kind of content fits you?

Have you seen any recommendations on mobile recently?

Do you think the recommendation comes from the AI or from the TV?

How do you choose content to watch on Netflix?

What’s important when you get a content recommendation?

How would you like to get recommendations from the TV?

Questionnaires

A printed summary showing all interviews

Content recommendation is useful because it saves time and helps users find what they want, but sometimes it looks like a promotion or advertisement.

VOD watching is more than just relaxation; it’s time to proactively get away from stress.

Concern about losing control and watching for too long

Thinking about what to watch is bothersome

A bare recommendation list looks like a promotion, so it’s not satisfactory

Long playtime is burdensome because of opportunity cost

Have no clue what to watch if it’s part of a big universe.

Interview Summary

Interview scripts summaries (kr)

Ideation

Now our main target is the low-involvement group. So, we need a ‘heuristic’ approach to persuade them.

4 ideas were created with the heuristic approach, based on the interviews.

Idea #1

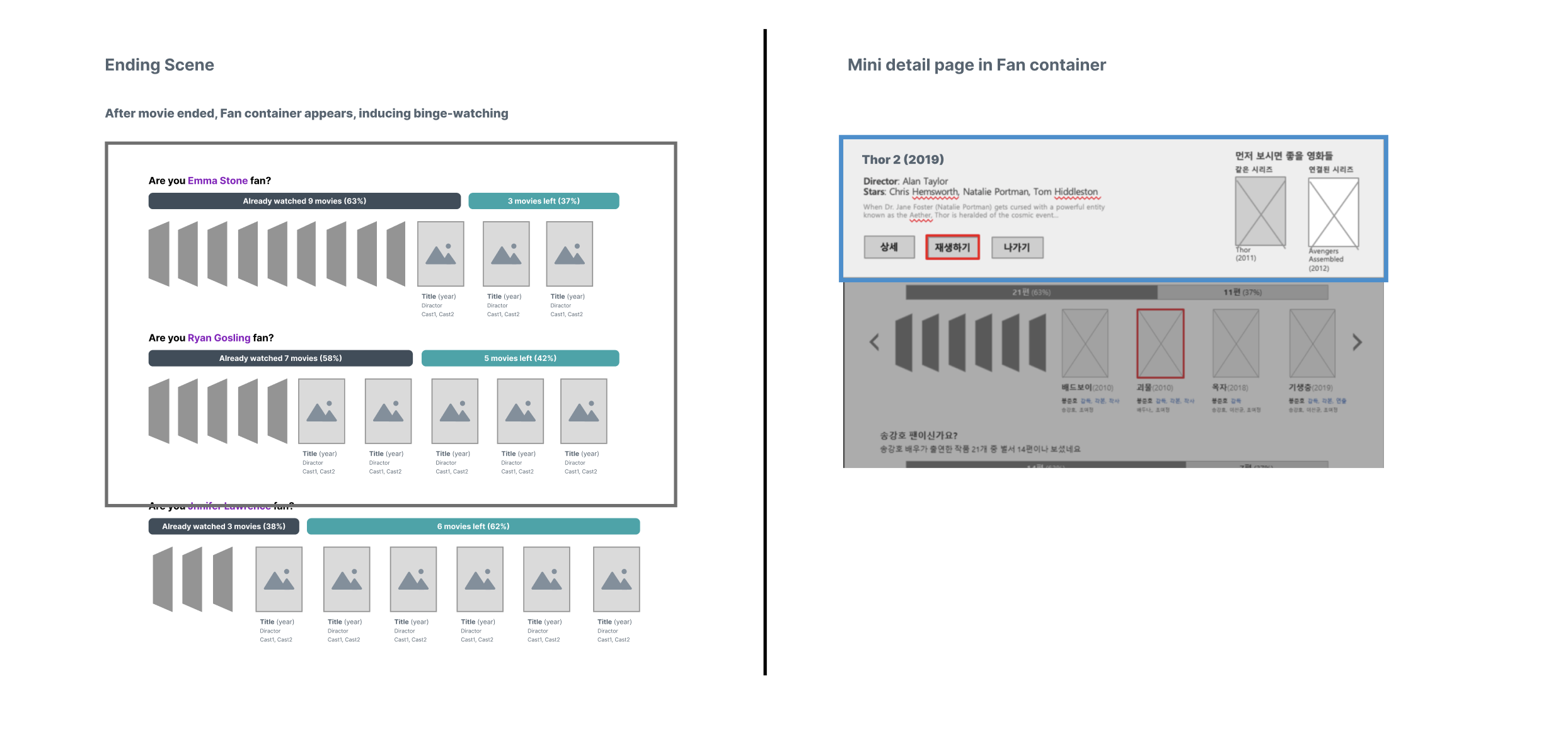

1. You were their fan

“Just the recommendation list looks like a promotion, so it’s unsatisfactory” (one of the interview summary points). Then let’s show why the recommended movies are related to the user. But how? By applying the ‘Goal-gradient effect’ and the ‘Labeling effect’ to induce people to watch more movies.

People realize they are somebody’s fan (labeling effect), and complete a to-watch list (goal-gradient effect).

Labeling effect

The ‘labeling effect’ means that the self-identity and behavior of individuals may be determined or influenced by the terms used to describe or classify them.

Psychological Concept 1:

Goal-gradient effect

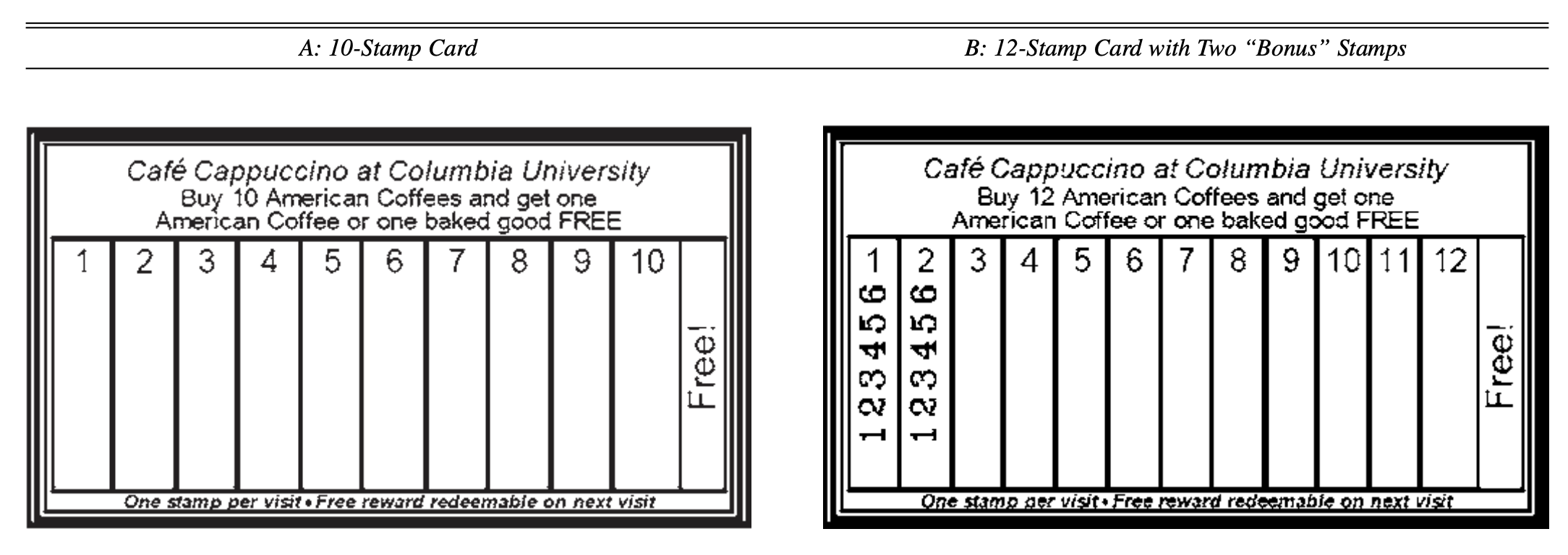

Humans are more likely to increase their effort when they are closer to their goals.

The goal-gradient effect states that the tendency to approach a goal increases with proximity to the goal, as originally proposed by Hull (1932) through an experiment with rats running for food in a maze. Many later studies have demonstrated that it applies to humans as well. A famous real-life example is the cafe stamp coupon: it gives a free coffee when the user fills in all the stamps, but the coupon starts with 2 or 3 stamps already filled in by default.

Psychological concept 2:

*For more explanation, please take a look at this page:

- Short explanation: https://lawsofux.com/goal-gradient-effect/

- Original academic research: https://journals.sagepub.com/doi/abs/10.1509/jmkr.43.1.39?journalCode=mrja

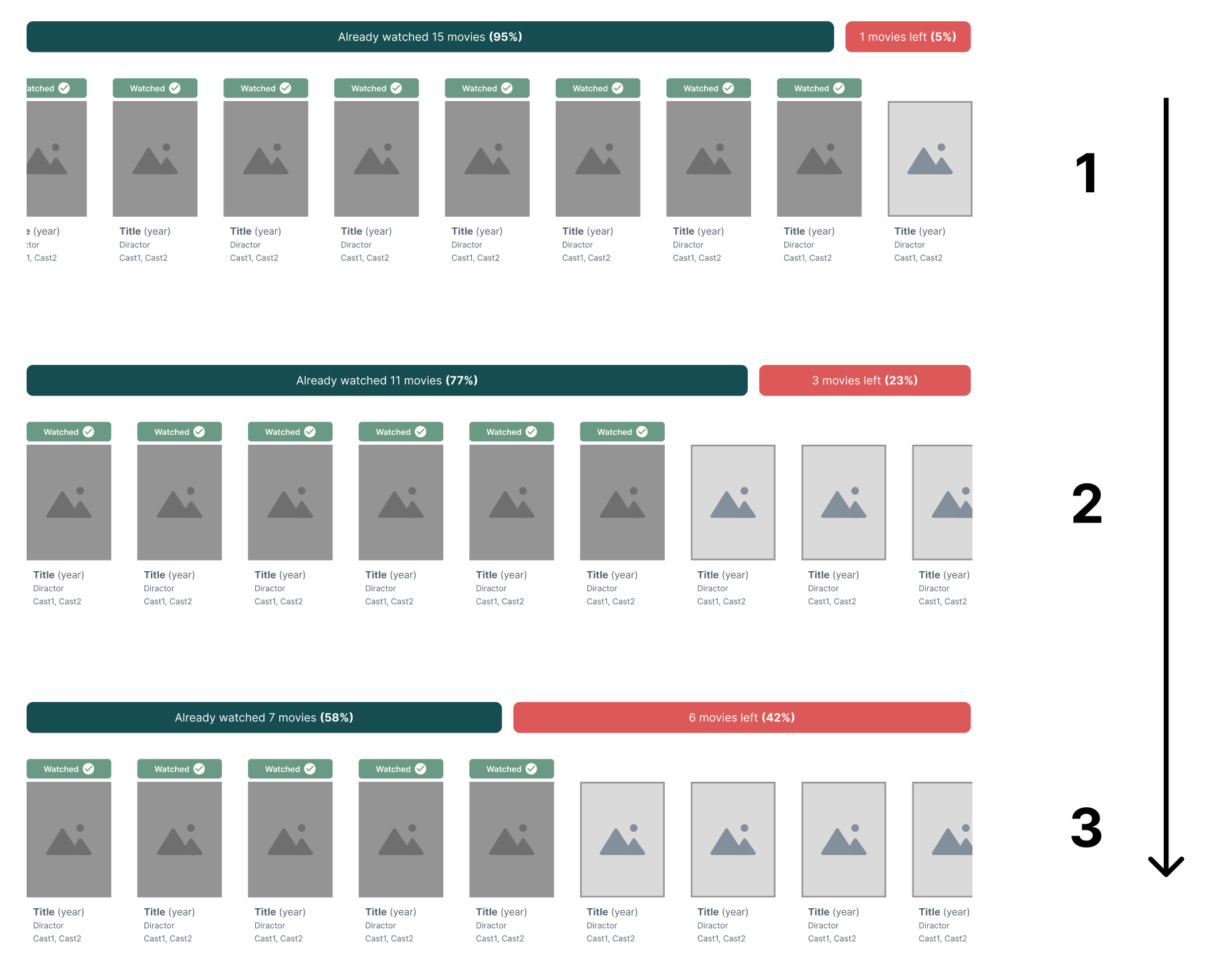

Design proposal

Keep watching, and feel achievement

1. By seeing how many movies by a specific director/actor they have watched, the user feels a sense of achievement and will try to complete the list.

2. The user can choose the next movie to watch more easily by referring to their watching history. This reduces decision fatigue and induces a more positive attitude toward the service.

Expected effect

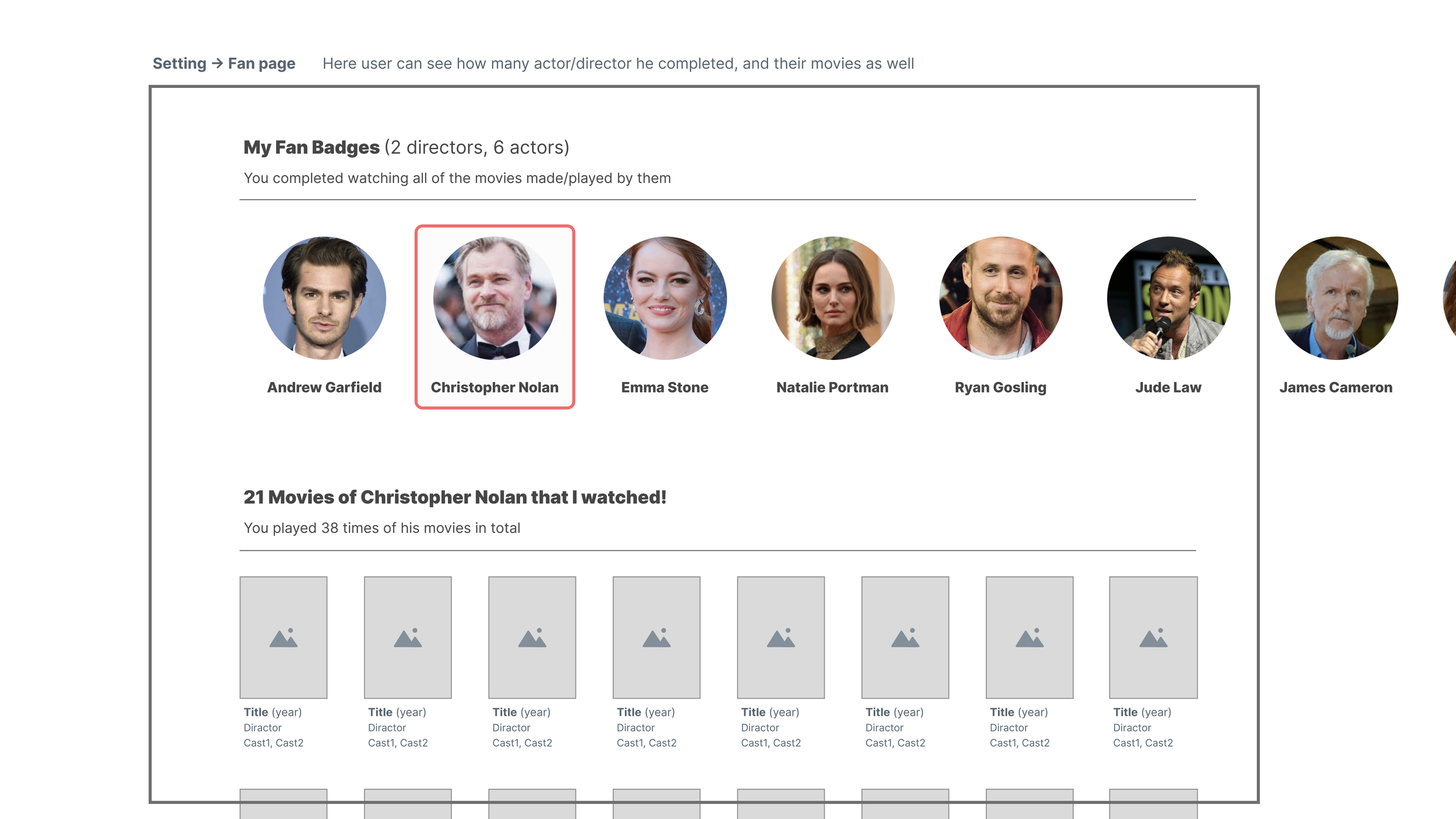

Display how many movies by a specific actor/director the user has watched

Grant a fan badge if the user has watched all movies by an actor/director

Feature

Minimum number of movies needed to form a fan list: 3

The list with the fewest movies left to watch comes to the top

After a movie ends, the fan list appears, which naturally induces ‘binge-watching’. If the user selects a movie in the list, a ‘mini detail page’ appears instead of the normal ‘full detail page’, so the user feels less burden in checking the details.
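The fan list rules above can be sketched roughly as follows. This is only an illustration under stated assumptions: I read the minimum of 3 as "the user has watched at least 3 movies by that person", completed lists are shown as badges rather than ranked, and all names (`FanList`, `build_fan_lists`, the sample filmographies) are hypothetical.

```python
from dataclasses import dataclass

MIN_WATCHED = 3  # assumed reading of "minimum number of movies: 3"


@dataclass
class FanList:
    person: str   # actor or director
    watched: int  # movies by this person the user has already watched
    total: int    # all movies by this person available on the service

    @property
    def remaining(self):
        return self.total - self.watched


def build_fan_lists(filmographies, watched_ids):
    """Create one fan list per person with enough watched movies."""
    lists = []
    for person, movie_ids in filmographies.items():
        watched = sum(1 for m in movie_ids if m in watched_ids)
        if watched >= MIN_WATCHED:
            lists.append(FanList(person, watched, len(movie_ids)))
    return lists


def rank_to_watch(lists):
    """Unfinished lists, with the fewest movies left to watch at the top."""
    unfinished = [fl for fl in lists if fl.remaining > 0]
    return sorted(unfinished, key=lambda fl: fl.remaining)


def fan_badges(lists):
    """People whose whole filmography the user has watched."""
    return [fl.person for fl in lists if fl.remaining == 0]


# Hypothetical catalogue and watch history.
filmographies = {
    "Director A": ["a1", "a2", "a3", "a4", "a5"],
    "Actor B": ["b1", "b2", "b3", "b4"],
    "Actor C": ["c1", "c2", "c3"],
    "Director D": ["d1", "d2", "d3", "d4"],
}
watched_ids = {"a1", "a2", "a3", "b1", "b2", "b3", "c1", "c2", "c3", "d1"}

lists = build_fan_lists(filmographies, watched_ids)
ranked = rank_to_watch(lists)
badges = fan_badges(lists)
```

Sorting by movies remaining puts the list closest to completion first, which is where the goal-gradient effect should be strongest.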

Idea #2

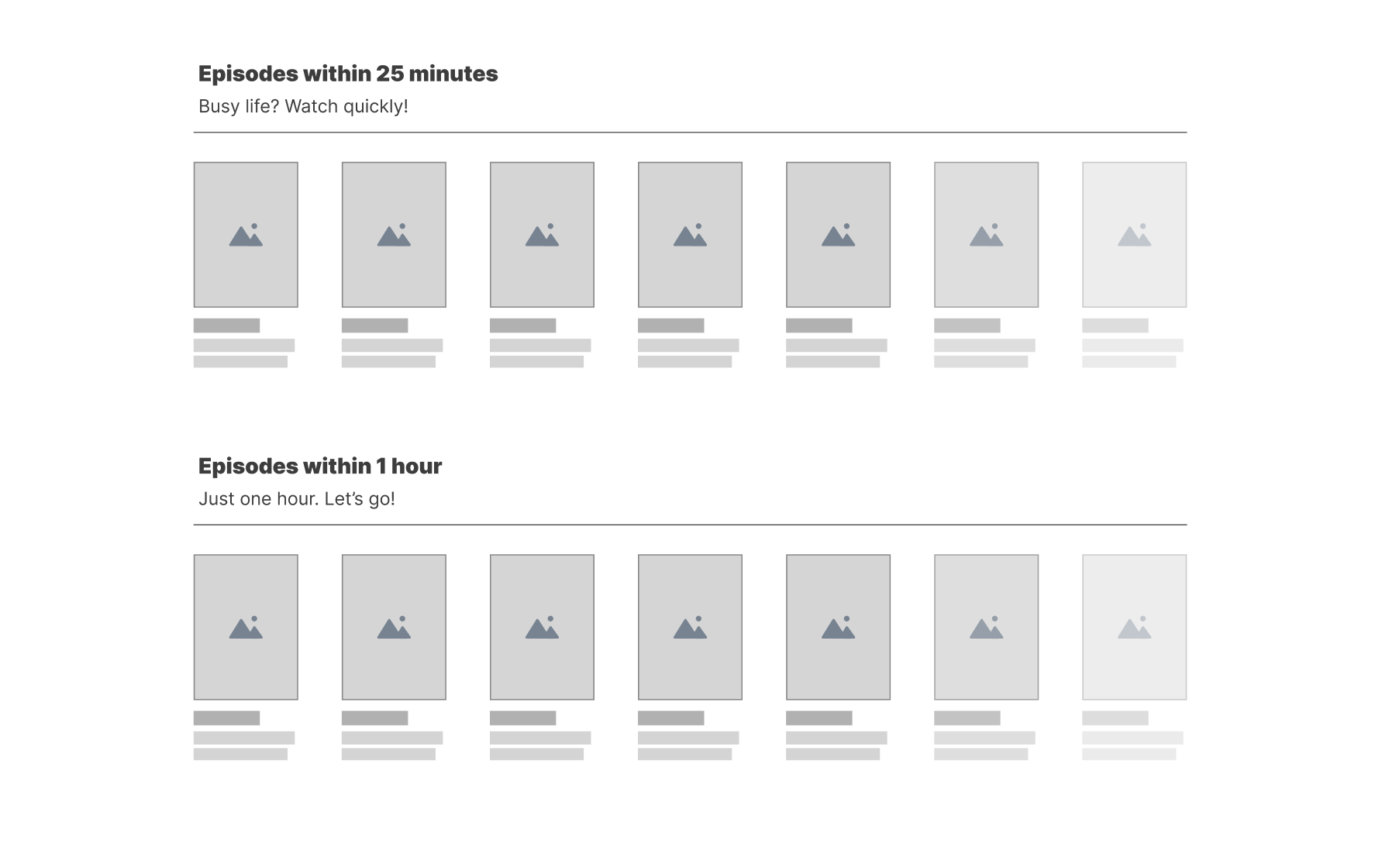

2. Within N minutes

“Thinking about what to watch is bothersome”

“Long playtime is burdensome because of opportunity cost”

Feel safe, watch comfortably

Anxiety about making the wrong choice makes users prefer short content. And concern about watching for a long time late at night makes users hesitate to watch anything.

The user knows the content will end within N minutes, so they don’t have to turn it off before it ends and don’t have to keep an eye on the time. This makes it less of a burden to start watching.

People who were worried about watching for too long will feel free to watch, which should lead to more frequent watching in the end.

Expected Effect

Contents that take N minutes or less are collected into a list and offered to the user

They can be episodes of a series, or a movie that has N minutes or less left from the last viewing

The user may set N

Features
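A minimal sketch of the "within N minutes" filter described here, assuming each item knows its total duration and how much the user has already watched. The `Item` type, field names, and sample titles are hypothetical; items are simply kept in their incoming order.

```python
from dataclasses import dataclass


@dataclass
class Item:
    title: str
    duration_min: int     # full running time in minutes
    watched_min: int = 0  # minutes already watched (0 if never started)

    @property
    def remaining_min(self):
        return self.duration_min - self.watched_min


def within_n_minutes(items, n):
    """Keep items the user can finish within n minutes.

    Covers both short content (such as a series episode) and longer
    content that has n minutes or less left from the last viewing.
    """
    return [item for item in items if 0 < item.remaining_min <= n]


# Hypothetical catalogue; n would come from the user's setting.
catalogue = [
    Item("Series episode", 25),
    Item("Movie, partly watched", 120, watched_min=100),
    Item("Long movie", 130),
    Item("Finished movie", 90, watched_min=90),
]
short_list = within_n_minutes(catalogue, 30)
```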

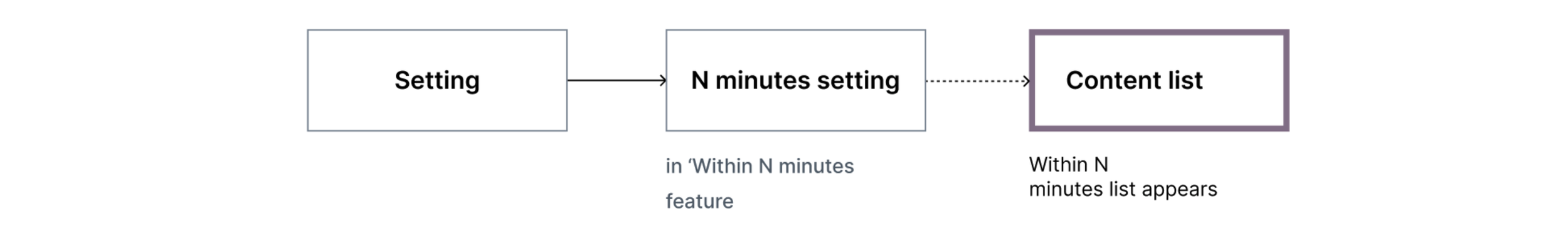

Flow

Idea #3

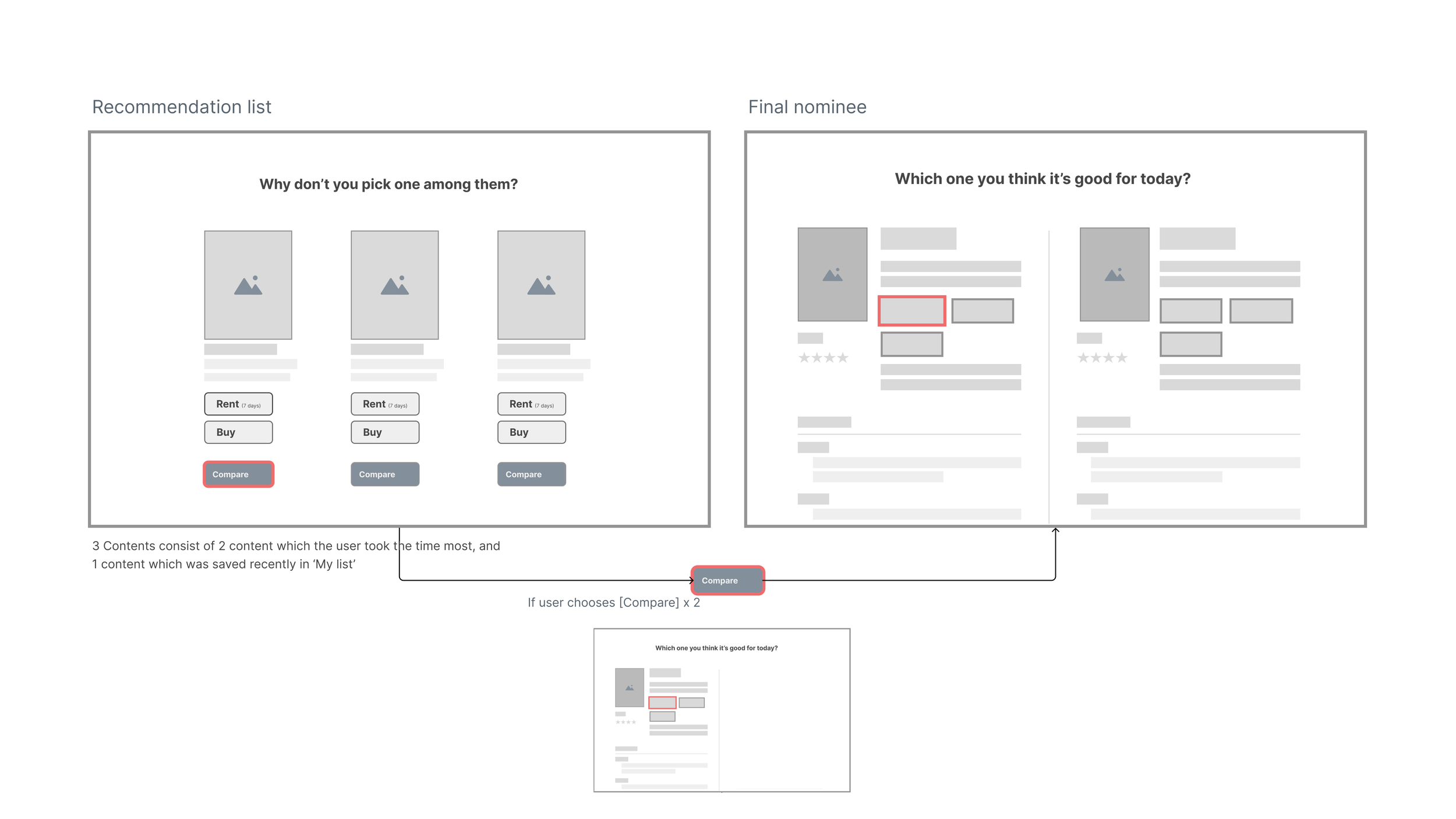

3. Push for hesitant users

“Thinking about what to watch is bothersome”

“Just recommendation list looks like a promotion, so it’s not satisfactory”

The user gets tired and doesn’t purchase anything.

The longer a user spends choosing what to watch, the less likely they are to purchase a movie.

Still searching after 10 minutes? 30 minutes? 1 hour?

Can’t decide what to watch? Nobody pushing you to watch something?

Then we’ll push you!

The user gets tired and doesn’t purchase anything. The longer the user spends choosing what to watch, the more exhausted they get, and the less likely they are to purchase a movie.

Expected problem

Idea

If the user keeps searching for N minutes, the system shows a list of 2 or 3 recommendations

The time left is displayed in real time as an integer
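The trigger could look roughly like the sketch below. It rests on an assumption: I treat "time left" as the whole minutes remaining before the push appears, which the concept itself does not specify. The function name, the returned dictionary, and the sample titles are hypothetical.

```python
import math


def push_state(elapsed_seconds, n_minutes, candidates, max_items=3):
    """Decide what the browsing screen should show right now.

    elapsed_seconds: how long the user has been browsing without choosing.
    n_minutes: the threshold N after which the system pushes.
    candidates: recommended titles, best first.
    """
    remaining = n_minutes * 60 - elapsed_seconds
    if remaining > 0:
        # Still browsing: show the time left as a whole number of minutes.
        return {
            "show_push": False,
            "minutes_left": math.ceil(remaining / 60),
            "items": [],
        }
    # Threshold reached: push a short list of at most max_items titles.
    return {
        "show_push": True,
        "minutes_left": 0,
        "items": candidates[:max_items],
    }


# Hypothetical recommendation candidates.
candidates = ["Title 1", "Title 2", "Title 3", "Title 4"]
before = push_state(elapsed_seconds=541, n_minutes=10, candidates=candidates)
after = push_state(elapsed_seconds=600, n_minutes=10, candidates=candidates)
```

Keeping the pushed list to 2 or 3 items matches the heuristic approach: a hesitant user gets fewer options to compare, not more.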

Idea #4

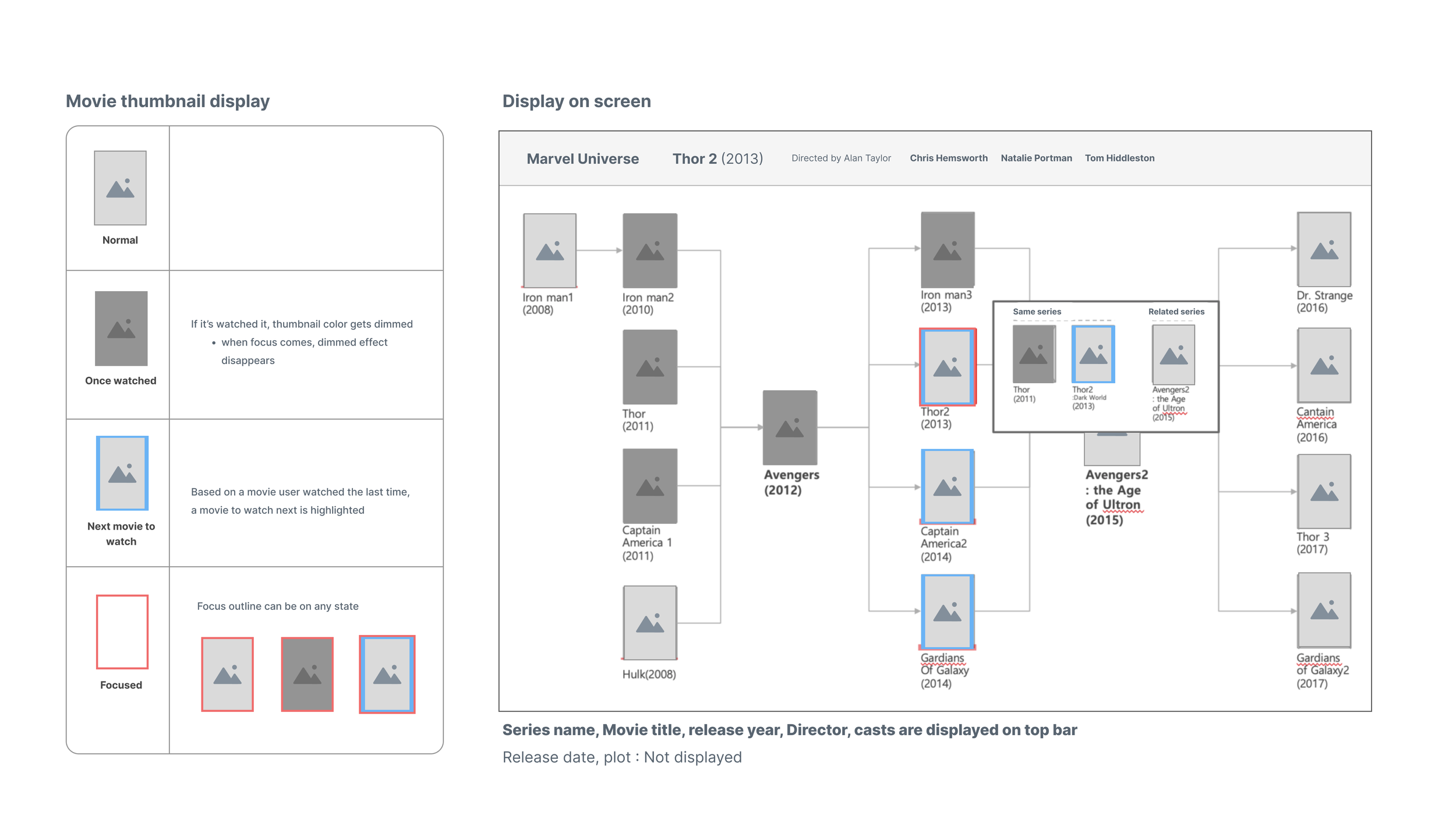

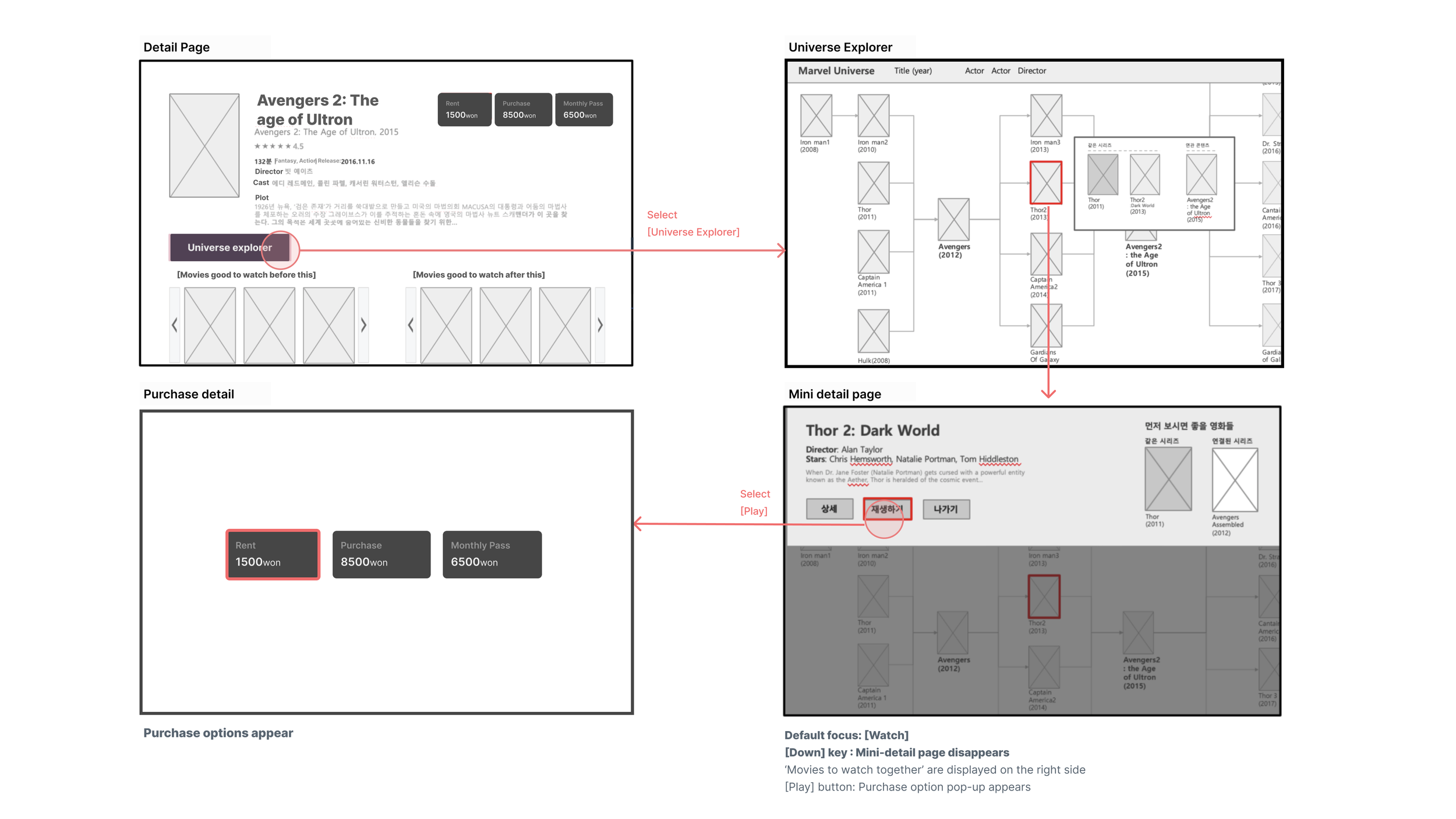

4. Universe Explorer

Marvel Universe... DC Universe...

Many ‘universe’ movies are being released, each mixed with other movies in the same UNIVERSE. People want to understand what’s what, or want to watch the related movies before watching a newly released one.

So here’s a design suggestion for exploring a UNIVERSE.

Afraid to watch a movie in a big Universe

A user who has not been following the movies in a ‘Universe’ feels afraid to watch any of them, because each is related to past movies in that ‘Universe’. They don’t even know where to start.

Pain Point:

Check the map, understand it, and watch it

With a tree-structured map, users can easily understand the hierarchy of the series, so they can decide where to start watching, what to skip, and what to watch more of. Understanding gives them confidence, and they can decide for themselves what to do.

Expected Effect:

Flow

Thoughts after project

PROS

A good mix of user interviews and solutions grounded in psychological principles.

Having a view based on academic knowledge: thinking of recommendation as persuasion let me think about solutions with confidence.

Project initiative: I experienced how to create a project in a company, persuading people with proper plans and reasons.

CONS

Missing the test/iteration process: This happened due to the lack of time and people at that time. Other client projects were waiting, so right after the concept presentation, this project couldn’t go further into iterative tests.

Next time I get a chance to take on a new concept development project, I’ll make sure to include a test period, so we can check the actual value of the ideas and solutions with evidence.